The problem nobody is talking about

Ask ChatGPT a question about your industry. Go ahead, do it right now.

Does your website show up in the response? Does it get cited as a source?

For 90%+ of businesses, the answer is no. Your site is invisible. It doesn't exist in the AI search world. And that world is growing fast.

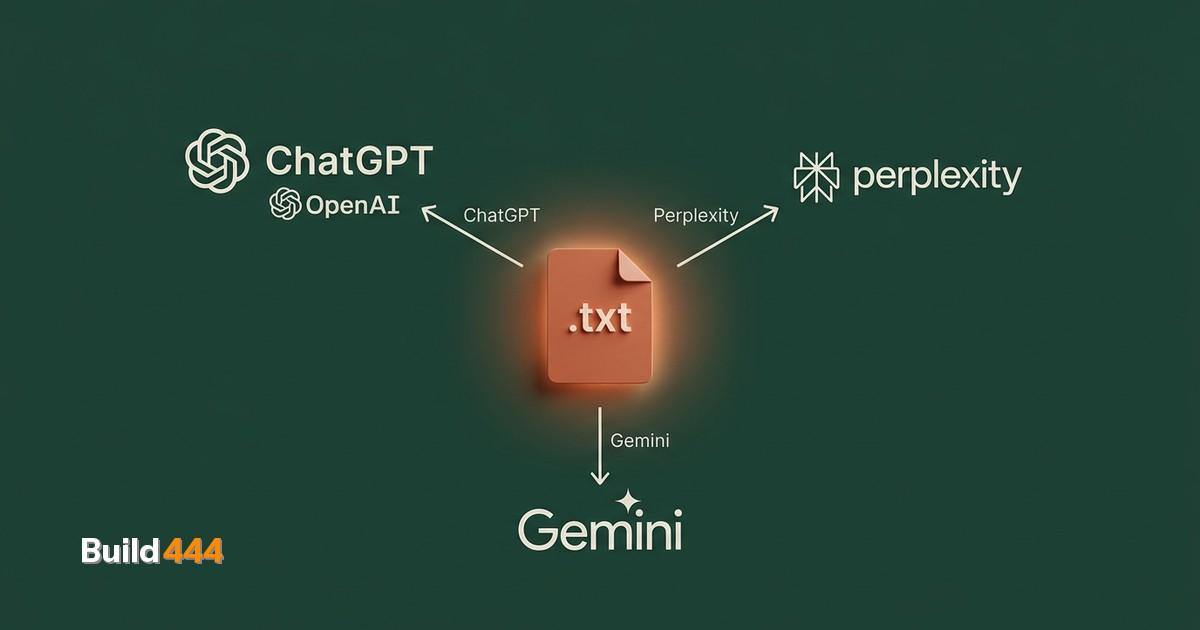

800M+ weekly active users on ChatGPT. 25M+ monthly users on Perplexity. 18-50% of Google queries now show AI Overviews. AI search is not experimental. It is mainstream.

ChatGPT has over 800 million weekly active users. Perplexity has passed 25 million monthly users. Google's AI Overviews now show up on anywhere from 18% to over 50% of search queries, depending on who's counting. These aren't experimental toys anymore. They're where people get answers.

Here's what makes this different from regular SEO: in traditional search, you at least show up on page 3 or page 7. You exist. In AI search, you either get cited or you don't. There's no page 2. You're visible or invisible. Binary.

"We're witnessing a transition from the 'ten blue links' model of search to what we might call 'direct answer engines' — systems that synthesize information from multiple sources to provide complete responses." — Rand Fishkin, Co-founder of SparkToro (Search Engine Land, 2025)

I've built an automated audit pipeline that checks AI search readiness across 10 categories. The patterns are clear. Most sites fail for the same handful of reasons, and most of those reasons are fixable in a weekend.

What AI search engines actually want

Forget everything you know about keyword density and backlink profiles for a second. AI search engines process your site differently than Google's traditional crawler.

They want to understand three things:

- What does this page actually say? Not what keywords it targets. What information it contains.

- Who wrote it, and why should anyone trust them? Author bios, credentials, company info.

- Is this content structured in a way I can parse? Headings, lists, schema markup, clear sections.

That's it. AI models are surprisingly good at understanding content quality, but they need help understanding context and trust.

Think about it from the AI's perspective. It's choosing among many candidate pages to answer a single question. It needs to quickly determine: is this source reliable, is this information specific, and can I extract a clear answer from it?

If your page is a wall of text with no headings, no author, no structured data, and vague claims, the AI skips you. Not because your content is bad. Because it can't efficiently verify and cite you.

The 10-point AI visibility checklist

I've boiled this down to the things that actually move the needle. No theory. Just what works.

1. Don't block AI crawlers in robots.txt

This sounds obvious, but I see it constantly. Check your robots.txt right now. If you see any of these, you have a problem:

```
User-agent: GPTBot
Disallow: /

User-agent: ChatGPT-User
Disallow: /

User-agent: PerplexityBot
Disallow: /
```

Over 35% of the top 1,000 websites block GPTBot. Some do it intentionally. Most don't realize they're doing it, because an overly broad disallow rule catches AI crawlers too.

Fix: Explicitly allow AI crawlers, or at minimum, don't block them.
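If you'd rather not eyeball the file, Python's standard-library `urllib.robotparser` can answer the question for you. The sketch below checks a robots.txt body against the three user agents named above. The function name `blocked_ai_crawlers` and the sample file are illustrative, and the crawler list is not exhaustive.

```python
from urllib.robotparser import RobotFileParser

# The three user agents named in this article; other AI crawlers exist too.
AI_CRAWLERS = ["GPTBot", "ChatGPT-User", "PerplexityBot"]


def blocked_ai_crawlers(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI user agents that this robots.txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    # Hypothetical robots.txt: GPTBot is blocked everywhere, everyone else only from /admin/.
    sample = (
        "User-agent: GPTBot\n"
        "Disallow: /\n"
        "\n"
        "User-agent: *\n"
        "Disallow: /admin/\n"
    )
    print(blocked_ai_crawlers(sample))  # prints ['GPTBot']
```

To check a live site you could call `set_url()` and `read()` on the parser instead of `parse()`. The sketch parses a string so it runs without network access. Python's parser does simple prefix matching and ignores wildcards inside paths, so treat the result as a first pass rather than a guarantee of how each vendor's crawler reads your rules.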

2. Add FAQ schema to your top pages

FAQ schema is the single highest-impact change for AI visibility. When ChatGPT or Perplexity answers a question and your page has FAQ schema with that exact question, you're far more likely to get cited.

```html
<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@type": "FAQPage",
  "mainEntity": [{
    "@type": "Question",
    "name": "How do I make my website visible to ChatGPT?",
    "acceptedAnswer": {
      "@type": "Answer",
      "text": "Add structured data, ensure AI crawlers can access your site..."
    }
  }]
}
</script>
```

Pick the 5 questions people actually ask about your business. Add FAQ schema for each one. This takes 30 minutes.
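Writing the markup by hand for five questions is manageable, but generating it avoids nesting mistakes. Here is a minimal Python sketch, assuming you keep your questions and answers as plain (question, answer) pairs. `faq_jsonld` and `faq_script_tag` are hypothetical helper names, not part of any library.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    text = json.dumps(data, indent=2, ensure_ascii=False)
    # "<\/" is a valid JSON escape for "</", so an answer containing "</script>"
    # cannot close the surrounding <script> element early.
    return text.replace("</", "<\\/")


def faq_script_tag(pairs: list[tuple[str, str]]) -> str:
    """Wrap the JSON-LD in the <script> element that goes into the page's HTML."""
    return f'<script type="application/ld+json">\n{faq_jsonld(pairs)}\n</script>'


if __name__ == "__main__":
    # Hypothetical questions; replace them with the ones your customers actually ask.
    print(faq_script_tag([
        ("How long does an SEO audit take?", "(your specific answer here)"),
        ("Do you work with small businesses?", "(your specific answer here)"),
    ]))
```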

"GEO can boost source visibility in generative engine responses by up to 40%. Adding expert quotations increased visibility by 41%, while naive keyword stuffing actually reduced it by 10%." — Aggarwal et al., Princeton University, GEO: Generative Engine Optimization (KDD 2024)

3. Use proper heading hierarchy

AI models rely heavily on headings to understand content structure. Every page needs one H1. Sections under it get H2s. Sub-sections get H3s. Don't skip levels. Don't use headings for styling. (We check heading hierarchy and 46 other points in our full SEO audit checklist.)

This isn't new advice, but it matters more now. A well-structured page is far easier for an AI to parse and cite than a flat wall of text.
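Heading hierarchy is easy to check mechanically. This sketch uses the standard-library `html.parser` to list heading levels in document order, then flags a missing or repeated H1 and any jump of more than one level (an H2 followed directly by an H4). It is a rough lint based on the two rules above, not the audit pipeline described earlier; the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Record the level (1-6) of every <h1>..<h6> start tag, in document order."""

    def __init__(self):
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # html.parser lowercases tag names, so <H2> arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_problems(html: str) -> list[str]:
    """Flag a missing or repeated <h1> and any skipped heading level."""
    collector = HeadingCollector()
    collector.feed(html)
    collector.close()
    levels = collector.levels
    problems = []
    h1_count = levels.count(1)
    if h1_count != 1:
        problems.append(f"expected exactly one <h1>, found {h1_count}")
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:  # e.g. <h2> straight to <h4>
            problems.append(f"skipped level: <h{previous}> followed by <h{current}>")
    return problems


if __name__ == "__main__":
    print(heading_problems("<h1>Guide</h1><h2>Setup</h2><h4>Details</h4>"))
    # prints ['skipped level: <h2> followed by <h4>']
```

Moving back up a level (an H3 followed by a new H2) is allowed, since that is just the start of the next section.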

4. Add author and organization schema

E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) isn't just a Google thing anymore. AI models weight content differently based on who wrote it. We break down exactly how E-E-A-T signals affect ranking in a separate guide.

Add Person schema for authors. Add Organization schema for your company. Include credentials, experience, and links to other authoritative profiles (LinkedIn, industry publications).
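One way to express those relationships in JSON-LD is an `Article` whose `author` is a `Person` and whose `publisher` is an `Organization`, with `sameAs` pointing at external profiles such as LinkedIn. The helper functions and every name and URL in the example are hypothetical placeholders; check the schema.org pages for `Person` and `Organization` before adding further properties.

```python
import json


def organization(name: str, url: str, profiles: list[str]) -> dict:
    """schema.org Organization for the company behind the site."""
    return {"@type": "Organization", "name": name, "url": url, "sameAs": profiles}


def person(name: str, job_title: str, profiles: list[str], employer: dict) -> dict:
    """schema.org Person for an author; sameAs links to profiles that vouch for them."""
    return {
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "sameAs": profiles,
        "worksFor": employer,
    }


def article(headline: str, author: dict, publisher: dict) -> str:
    """Article JSON-LD that ties a page to its author and publishing organization."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": author,
        "publisher": publisher,
    }, indent=2)


if __name__ == "__main__":
    # All names and URLs below are hypothetical placeholders.
    company = organization("Example Agency", "https://example.com",
                           ["https://www.linkedin.com/company/example-agency"])
    writer = person("Jane Doe", "Head of SEO",
                    ["https://www.linkedin.com/in/jane-doe-example"], company)
    print(article("How to make your site visible to AI search", writer, company))
```

This version repeats the organization inside `worksFor` and `publisher` for readability. A fuller version would give the organization an `@id` and reference it instead of copying it.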

5. Write long-form, specific content

Thin 300-word pages rarely get cited. AI search engines prefer comprehensive content that thoroughly covers a topic.

The sweet spot is 1,500 to 3,000 words per page on your core topics. Not fluff. Specific, detailed, opinionated content with real data points.
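To see where a page sits relative to that range, you can count the words in its visible text. The sketch below skips `<script>` and `<style>` content but still counts navigation and footer text, so treat the number as an upper bound on the body copy. The 1,500 and 3,000 defaults come from the paragraph above; the function names are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text that sits outside <script> and <style> elements."""

    def __init__(self):
        super().__init__()
        self.skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth:
            self.chunks.append(data)


def word_count(html: str) -> int:
    parser = VisibleText()
    parser.feed(html)
    parser.close()  # flush any text buffered after the last tag
    return len(" ".join(parser.chunks).split())


def length_verdict(html: str, low: int = 1500, high: int = 3000) -> str:
    words = word_count(html)
    if words < low:
        return f"{words} words: below the {low}-{high} range"
    if words > high:
        return f"{words} words: above the {low}-{high} range"
    return f"{words} words: within range"
```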

6. Include statistics and specific claims

"Our product is great" gets you nothing. "Our product reduced page load time by 43% across 200 client sites" gets cited.

AI models love specificity. Numbers, percentages, dates, named studies. Every specific claim is a potential citation anchor.
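A crude way to spot vague pages is to measure how many sentences contain any digit at all. The heuristic below does exactly that and nothing more. It misses named studies and numbers written out in words, and its naive sentence split stumbles on abbreviations like "e.g.". Use it to find candidates for a rewrite, not as a score. `specificity_ratio` is a hypothetical name.

```python
import re

# Split after ., ! or ? when followed by whitespace (so "43.5%" stays intact).
SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")
HAS_FIGURE = re.compile(r"\d")


def specificity_ratio(text: str) -> float:
    """Share of sentences that contain at least one digit (0.0 for empty text)."""
    sentences = [s for s in SENTENCE_SPLIT.split(text.strip()) if s]
    if not sentences:
        return 0.0
    specific = sum(1 for s in sentences if HAS_FIGURE.search(s))
    return specific / len(sentences)


if __name__ == "__main__":
    print(specificity_ratio(
        "Our product is great. "
        "Our product reduced page load time by 43% across 200 client sites."
    ))  # prints 0.5
```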

7. Implement OpenGraph and meta descriptions properly

When an AI interface does cite you, it may pull your OG title, description, and image to build the citation card. If these are missing or generic ("Welcome to our website"), you lose the click even when you win the citation.

Every page needs a unique, descriptive OG title and description. Not a keyword-stuffed mess. A clear statement of what the page covers.
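Missing or generic tags are straightforward to detect. This sketch collects `<meta>` tags with the standard-library HTML parser (Open Graph tags use `property=`, the plain description uses `name=`) and reports anything missing or matching a short list of generic values. The value list and function names are hypothetical; extend the list with the boilerplate your own CMS produces.

```python
from html.parser import HTMLParser

REQUIRED_TAGS = ("og:title", "og:description", "og:image", "description")
# Hypothetical examples of boilerplate values; extend with your CMS's defaults.
GENERIC_VALUES = {"welcome to our website", "home", "untitled"}


class MetaCollector(HTMLParser):
    """Map each <meta> tag's property= or name= attribute to its content= value."""

    def __init__(self):
        super().__init__()
        self.meta: dict[str, str] = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        # Open Graph uses property="og:...", the standard description uses name="description".
        key = attributes.get("property") or attributes.get("name")
        content = attributes.get("content")
        if key and content is not None:
            self.meta[key.lower()] = content.strip()


def meta_problems(html: str) -> list[str]:
    """List missing or generic title, description, and image tags."""
    collector = MetaCollector()
    collector.feed(html)
    collector.close()
    problems = []
    for key in REQUIRED_TAGS:
        value = collector.meta.get(key, "")
        if not value:
            problems.append(f"missing {key}")
        elif value.lower() in GENERIC_VALUES:
            problems.append(f"generic {key}: {value!r}")
    return problems
```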

8. Create a clear site architecture

AI crawlers follow your internal links just like Googlebot. If your best content is buried 5 clicks deep with no internal links pointing to it, it won't get indexed well.

Your most important content should be reachable within 2 clicks from your homepage. Use a logical URL structure. Link related content together.
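Click depth is a breadth-first search over your internal link graph, starting at the homepage. The sketch assumes you already have that graph as a mapping from each URL path to the paths it links to (for example, exported from a crawler); the example site structure and the function names are invented. Pages that no link path reaches report as infinitely deep, which is how orphaned content shows up.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Fewest clicks from home to every reachable page (breadth-first search)."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # the first visit in BFS order is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links: dict[str, list[str]], pages: list[str],
             max_depth: int = 2, home: str = "/") -> list[str]:
    """Pages deeper than max_depth clicks, including pages no link path reaches."""
    depths = click_depths(links, home)
    return sorted(p for p in pages if depths.get(p, float("inf")) > max_depth)


if __name__ == "__main__":
    # Invented site structure for illustration.
    site = {
        "/": ["/services", "/blog"],
        "/blog": ["/blog/page-2"],
        "/blog/page-2": ["/blog/ai-search-guide"],
    }
    print(too_deep(site, ["/services", "/blog/ai-search-guide", "/pricing-faq"]))
    # prints ['/blog/ai-search-guide', '/pricing-faq']
```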

9. Publish content that answers questions directly

Look at how ChatGPT responds: it answers questions. If your content is structured as clear answers to specific questions, you're aligned with how AI search works.

Start sections with the answer. Then explain. Don't bury the answer in paragraph 4 of a meandering introduction.

10. Keep content fresh

AI models learn from recent crawls. A page last updated in 2021 gets weighted less than one updated last month. Add dateModified to your schema. Actually update your content regularly.

This doesn't mean changing a comma. It means reviewing your content quarterly and updating facts, stats, and recommendations.
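If your pages carry `dateModified` in their JSON-LD, part of that quarterly review can be automated. The sketch below flags a page as stale when its `dateModified` (falling back to `datePublished`) is more than 90 days old, roughly one quarter. It assumes a single JSON-LD object per page; the threshold and the function name `is_stale` are assumptions to adjust.

```python
import json
from datetime import date


def is_stale(jsonld_text: str, today: date, max_age_days: int = 90) -> bool:
    """True if the JSON-LD's dateModified (or datePublished) is older than max_age_days."""
    data = json.loads(jsonld_text)
    stamp = data.get("dateModified") or data.get("datePublished")
    if not stamp:
        return True  # no date at all: nothing signals that the page is current
    # Keep only YYYY-MM-DD so both "2025-03-01" and "2025-03-01T09:00:00Z" parse.
    modified = date.fromisoformat(stamp[:10])
    return (today - modified).days > max_age_days


if __name__ == "__main__":
    page = '{"@type": "Article", "dateModified": "2021-06-01"}'
    print(is_stale(page, today=date.today()))
```

Passing `today` in explicitly, rather than reading the clock inside the function, keeps the check easy to test.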

The three mistakes I see everywhere

Mistake 1: Blocking AI crawlers "for safety." Some site owners block GPTBot because they don't want AI "stealing" their content. I get the concern. But blocking crawlers doesn't prevent AI from learning about your content through other sources. It just prevents you from getting cited and getting traffic. You lose. The AI doesn't care.

Mistake 2: Having zero structured data. No schema, no FAQ markup, no author info. Your content might be brilliant, but you're making the AI do all the work to understand it. That's a competitive disadvantage when 50 other sites make it easy.

Mistake 3: Writing for keywords instead of questions. Traditional SEO trained us to think in keywords. AI search thinks in questions and answers. If your page title is "Best SEO Services Copenhagen 2026" instead of "How to choose an SEO agency in Copenhagen," you're optimizing for the wrong paradigm.

"Good SEO is good GEO." — Danny Sullivan, Public Liaison for Google Search (Search Engine Land, 2025)

Sullivan's point is worth paying attention to. The fundamentals of good SEO — clear structure, real expertise, helpful content — are the same things that get you cited by AI search engines.

How to check your AI visibility right now

Here's a 5-minute test:

- Open ChatGPT (or Perplexity, or Google with AI Overviews)

- Ask a question your website should answer

- Check if your site gets cited

- Try 5 different questions related to your business

- If you get zero citations, you have work to do

You can also check your server logs for GPTBot, ChatGPT-User, and PerplexityBot user agents. If they're not crawling you, the crawlers either can't find you or you're blocking them.
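Counting those user agents in an access log takes a few lines. The sketch below does a plain, case-sensitive substring match per line, so it works on most common log formats. User agent strings can be spoofed, so a hit is a hint rather than proof that the real crawler visited. `count_ai_hits` is a hypothetical name.

```python
from collections.abc import Iterable

# The user agent tokens named in this article.
AI_AGENTS = ("GPTBot", "ChatGPT-User", "PerplexityBot")


def count_ai_hits(log_lines: Iterable[str]) -> dict[str, int]:
    """Count log lines whose raw text contains each AI user agent token."""
    counts = {agent: 0 for agent in AI_AGENTS}
    for line in log_lines:
        for agent in AI_AGENTS:
            if agent in line:  # substring match anywhere in the line
                counts[agent] += 1
    return counts
```

You could feed it an open file directly, e.g. `with open("access.log") as log: print(count_ai_hits(log))`, where the path is a placeholder for your server's log. Zero hits for all three agents over a few weeks is the signal worth investigating.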

For a more thorough analysis, run a full AI readiness audit. Our SEO audit report includes a dedicated AI readiness category that checks all of this — schema, crawlers, heading structure, content specificity — scored and delivered as a PDF.

What to do next

Don't try to fix everything at once. Start with the biggest wins:

- Check and fix robots.txt (5 minutes)

- Add FAQ schema to your top 5 pages (2 hours)

- Add author schema to your blog (1 hour)

- Review and improve your top 10 pages for specificity (ongoing)

That's a weekend of work that puts you ahead of 90% of your competitors. Most businesses haven't even started thinking about AI search optimization. By the time they do, the early movers will already own the citations.
